Tis the season for a squeezin’

New research challenges the prevailing idea that AI needs huge datasets to solve problems.

A pair of Carnegie Mellon University researchers recently discovered hints that the process of compressing information can solve complex reasoning tasks without pre-training on a large number of examples. Their system tackles some types of abstract pattern-matching tasks using only the puzzles themselves, challenging conventional wisdom about how machine-learning systems acquire problem-solving abilities.

“Can lossless information compression by itself produce intelligent behavior?” ask Isaac Liao, a first-year PhD student, and his advisor, Professor Albert Gu, from CMU’s Machine Learning Department. Their work suggests the answer might be yes. To demonstrate, they created CompressARC and published the results in a comprehensive post on Liao’s website.

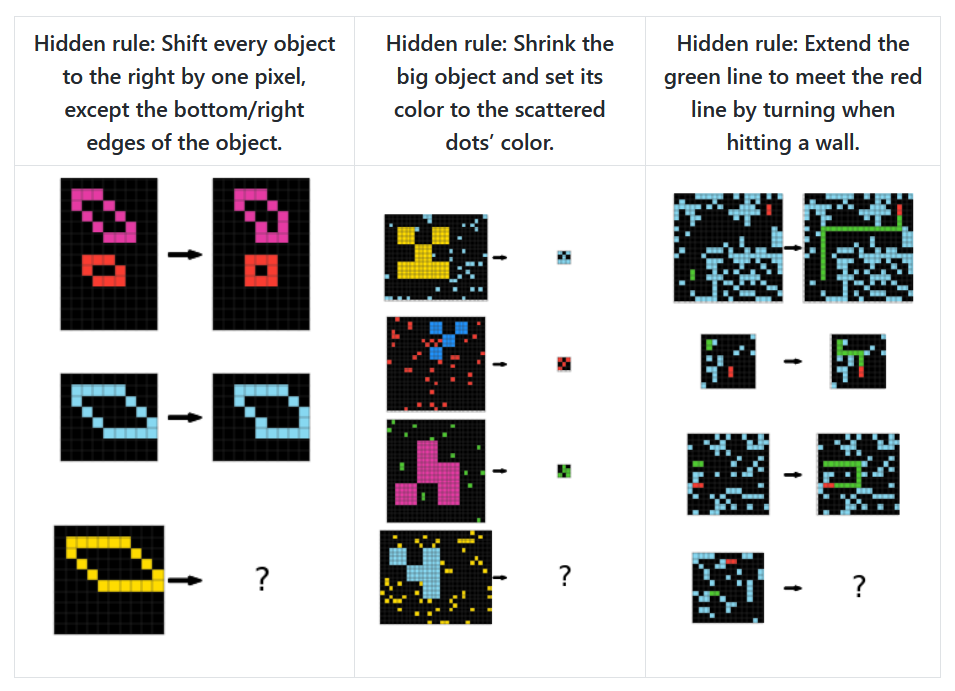

The pair tested their approach on the Abstraction and Reasoning Corpus (ARC-AGI), an unbeaten visual benchmark created in 2019 by machine-learning researcher François Chollet to test AI systems’ abstract reasoning skills. ARC presents systems with grid-based image puzzles. Each puzzle provides several examples demonstrating an underlying rule, and the system must infer that rule and apply it to a new example.

For instance, one ARC-AGI puzzle shows a grid with light-blue rows and columns dividing the space into boxes. The task requires figuring out which colors belong in which boxes based on their position: black for corners, magenta for the middle, and directional colors (red for up, blue for down, green for right, and yellow for left) for the remaining boxes. Here are three other example ARC-AGI puzzles, taken from Liao’s website:

Three example ARC-AGI benchmarking puzzles. Credit: Isaac Liao / Albert Gu

The puzzles test capabilities that some experts believe may be fundamental to general human-like reasoning (often called “AGI” for artificial general intelligence). These properties include understanding object persistence, goal-directed behavior, counting, and basic geometry without requiring specialized knowledge. The average human solves 76.2 percent of the ARC-AGI puzzles, while human experts reach 98.5 percent.

OpenAI [made waves](https://arstechnica.com/information-technology/2024/12/openai-announces-o3-and-o3-mini-its-next-simulated-reasoning-models/) in December for the claim that its o3 simulated reasoning model earned a record-breaking score on the ARC-AGI benchmark. In testing with computational limits, o3 scored 75.7 percent on the test, while in high-compute testing (basically unlimited thinking time), it reached 87.5 percent, which OpenAI says is comparable to human performance.

CompressARC achieves 34.75 percent accuracy on the ARC-AGI training set (the collection of puzzles used to develop the system) and 20 percent on the evaluation set (a separate group of unseen puzzles used to test how well the approach generalizes to new problems). Each puzzle takes about 20 minutes to process on a consumer-grade RTX 4070 GPU, compared to top-performing methods that use heavy-duty data center-grade machines and what the researchers describe as “astronomical amounts of compute.”

Not your typical AI approach

CompressARC takes a completely different approach than most current AI systems. Instead of relying on pre-training (the process where machine-learning models learn from massive datasets before tackling specific tasks), it works with no external training data whatsoever. The system trains itself in real time using only the specific puzzle it needs to solve.

“No pretraining; models are randomly initialized and trained during inference time. No dataset; one model trains on just the target ARC-AGI puzzle and outputs one answer,” the researchers write, describing their strict constraints.

When the researchers say “No search,” they’re referring to another common technique in AI problem-solving where systems try many different possible solutions and select the best one. Search algorithms work by systematically exploring options, like a chess program evaluating thousands of possible moves, rather than directly learning a solution. CompressARC avoids this trial-and-error approach and relies solely on gradient descent, a mathematical technique that incrementally adjusts the network’s parameters to reduce errors, similar to how you’d find the bottom of a valley by always walking downhill.
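To make the valley analogy concrete, here is a minimal Python sketch of gradient descent on a single number. The function names and the one-dimensional “valley” are made up for illustration and have nothing to do with CompressARC’s actual network; the point is only that each step moves a parameter a little way against the slope.

```python
def gradient_descent(grad, x0, learning_rate=0.1, steps=100):
    """Repeatedly step against the slope, i.e. keep walking downhill."""
    x = x0
    for _ in range(steps):
        x -= learning_rate * grad(x)
    return x


# Hypothetical "valley": f(x) = (x - 3)^2, whose lowest point is at x = 3.
def valley_slope(x):
    return 2 * (x - 3)


bottom = gradient_descent(valley_slope, x0=10.0)
print(round(bottom, 4))
```

A real network does the same thing with a very large number of parameters at once, using the error on the puzzle in place of the toy function above.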

The system’s core principle uses compression (finding the most efficient way to represent information by identifying patterns and regularities) as the driving force behind intelligence. CompressARC searches for the shortest possible description of a puzzle that can accurately reproduce the examples and the solution when unpacked.

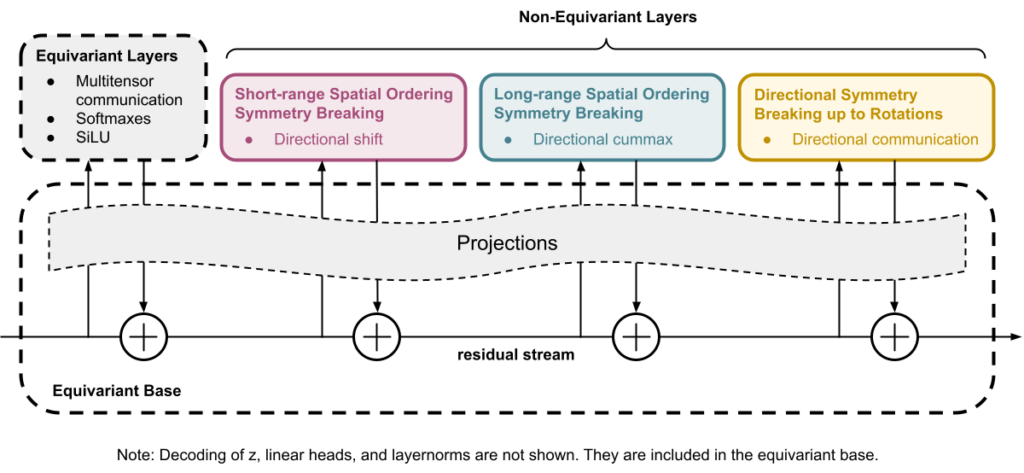

While CompressARC borrows some structural principles from transformers (like using a residual stream with representations that are operated upon), it’s a custom neural network architecture designed specifically for this compression task. It’s not based on an LLM or standard transformer model.

Unlike typical machine-learning methods, CompressARC uses its neural network only as a decoder. During encoding (the process of converting information into a compressed format), the system fine-tunes the network’s internal settings and the data fed into it, gradually making small adjustments to minimize errors. This creates the most compressed representation while correctly reproducing known parts of the puzzle. These optimized parameters then become the compressed representation that stores the puzzle and its solution in an efficient format.
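The decoder-only idea can be sketched with a toy that is far simpler than the real system. Everything in the Python below is an assumption made for illustration: the 3×3 grid, the `latent` and `weight` lists, and the rule that a cell is decoded as `latent[i] * weight[j]`. It is not CompressARC’s architecture, and it leaves out the compression objective entirely. What it does show is both the decoder’s settings and its input being adjusted by gradient descent until the known cells are reproduced, after which the unknown cell is simply read off.

```python
# Toy illustration only: NOT CompressARC's architecture or objective.
# A 3x3 "puzzle" whose bottom-right cell is the unknown answer.
target = [
    [2.0, 1.0, 3.0],
    [4.0, 2.0, 6.0],
    [6.0, 3.0, None],  # None marks the cell to be inferred
]

# Hypothetical decoder: cell (i, j) is decoded as latent[i] * weight[j].
# Six numbers describe nine cells, so the description is compact.
latent = [0.5, 0.5, 0.5]  # stands in for "the data fed into" the decoder
weight = [0.5, 0.5, 0.5]  # stands in for the decoder's "internal settings"

learning_rate = 0.01
for _ in range(5000):
    grad_latent = [0.0, 0.0, 0.0]
    grad_weight = [0.0, 0.0, 0.0]
    for i in range(3):
        for j in range(3):
            if target[i][j] is None:
                continue  # the answer is never used as a training signal
            error = latent[i] * weight[j] - target[i][j]
            grad_latent[i] += error * weight[j]
            grad_weight[j] += error * latent[i]
    for k in range(3):
        latent[k] -= learning_rate * grad_latent[k]
        weight[k] -= learning_rate * grad_weight[k]

# Decode the cell that was never shown to the optimizer.
answer = latent[2] * weight[2]
print(round(answer, 2))
```

Because the compact description can only reproduce the eight known cells by capturing the pattern behind them, the ninth cell falls out of the same description without ever being given as an input.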

An animated GIF showing the multi-step process of CompressARC solving an ARC-AGI puzzle. Credit: Isaac Liao

“The key challenge is to obtain this compact representation without needing the answers as inputs,” the researchers explain. The system essentially uses compression as a form of inference.

This approach could prove valuable in domains where large datasets don’t exist or when systems need to learn new tasks with minimal examples. The work suggests that some forms of intelligence might emerge not from memorizing patterns across vast datasets, but from efficiently representing information in compact forms.

The compression-intelligence connection

The potential connection between compression and intelligence may sound strange at first glance, but it has deep theoretical roots in computer science concepts like Kolmogorov complexity (the length of the shortest program that produces a specified output) and Solomonoff induction, a theoretical gold standard for prediction equivalent to an optimal compression algorithm.

To compress information efficiently, a system must recognize patterns, find regularities, and “understand” the underlying structure of the data. These abilities mirror what many consider intelligent behavior. A system that can predict what comes next in a sequence can compress that sequence efficiently. As a result, some computer scientists over the decades have suggested that compression may be equivalent to general intelligence. Based on these principles, the Hutter Prize has offered awards to researchers who can compress a 1GB file to the smallest size.
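The link between prediction and compression can be illustrated with a short Python sketch. It relies on a standard result from information theory: an ideal coder spends about −log2(p) bits on a symbol it predicted with probability p. The `code_length_bits` function and the simple count-based predictor are illustrative assumptions, not anything taken from the CMU work or the Hutter Prize.

```python
import math
from collections import defaultdict


def code_length_bits(sequence, alphabet, context_size):
    """Bits an ideal entropy coder would need if each symbol is coded with
    the probability assigned by an adaptive, count-based predictor."""
    counts = defaultdict(lambda: defaultdict(int))
    total_bits = 0.0
    for i, symbol in enumerate(sequence):
        # The predictor may look at the previous `context_size` symbols.
        context = sequence[max(0, i - context_size):i]
        seen = counts[context]
        # Laplace smoothing: every symbol starts with a pseudo-count of 1.
        prob = (seen[symbol] + 1) / (sum(seen.values()) + len(alphabet))
        total_bits += -math.log2(prob)
        seen[symbol] += 1
    return total_bits


text = "abcabcabc" * 20  # 180 symbols with an obvious repeating pattern
no_context = code_length_bits(text, "abc", context_size=0)
with_context = code_length_bits(text, "abc", context_size=1)
print(f"ignoring history: {no_context:.1f} bits")
print(f"using previous symbol: {with_context:.1f} bits")
```

The predictor that looks at the previous symbol quickly learns that “b” follows “a,” and so on, so it needs far fewer bits for the same text. Better prediction is what produces the shorter encoding.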

We previously wrote about intelligence and compression in September 2023, when a DeepMind paper discovered that large language models can sometimes outperform specialized compression algorithms. In that study, researchers found that DeepMind’s Chinchilla 70B model could compress image patches to 43.4 percent of their original size (beating PNG’s 58.5 percent) and audio samples to just 16.4 percent (outperforming FLAC’s 30.3 percent).

That 2023 research suggested a deep connection between compression and intelligence: the idea that truly understanding patterns in data enables more efficient compression, which aligns with this new CMU research. While DeepMind demonstrated compression capabilities in an already-trained model, Liao and Gu’s work takes a different approach by showing that the compression process can generate intelligent behavior from scratch.

This new research matters because it challenges the prevailing wisdom in AI development, which typically relies on massive pre-training datasets and computationally expensive models. While leading AI companies push toward ever-larger models trained on more extensive datasets, CompressARC suggests intelligence emerging from a fundamentally different principle.

“CompressARC’s intelligence emerges not from pretraining, vast datasets, exhaustive search, or massive compute—but from compression,” the researchers conclude. “We challenge the conventional reliance on extensive pretraining and data and propose a future where tailored compressive objectives and efficient inference-time computation work together to extract deep intelligence from minimal input.”

Limitations and looking ahead

Even with its successes, Liao and Gu’s system comes with clear limitations that may prompt skepticism. While it successfully solves puzzles involving color assignments, infilling, cropping, and identifying adjacent pixels, it struggles with tasks requiring counting, long-range pattern recognition, rotations, reflections, or simulating agent behavior. These limitations highlight areas where simple compression principles may not be sufficient.

The research has not been peer-reviewed, and the 20 percent accuracy on unseen puzzles, though notable without pre-training, falls significantly below both human performance and top AI systems. Critics might argue that CompressARC could be exploiting specific structural patterns in the ARC puzzles that might not generalize to other domains. That would call into question whether compression alone can serve as a foundation for broader intelligence, rather than being just one component among many required for robust reasoning capabilities.

And yet as AI development continues its rapid advance, if CompressARC holds up to further scrutiny, it offers a glimpse of a possible alternative path that might lead to useful intelligent behavior without the resource demands of today’s dominant approaches. Or at the very least, it might unlock an important component of general intelligence in machines, which is still poorly understood.

Benj Edwards is Ars Technica’s Senior AI Reporter and founder of the site’s dedicated AI beat in 2022. He’s also a tech historian with almost two decades of experience. In his free time, he writes and records music, collects vintage computers, and enjoys nature. He lives in Raleigh, NC.